Artificial intelligence (AI) is increasingly integrated into multiple stages of research, from literature searches, data management, statistical analysis, and image processing to language editing. It has given the research community many opportunities, helping with writing and reducing language barriers and financial constraints on research. Generative AI can boost research, but its use requires transparency and responsibility.

In the current era of AI, a major point of discussion for the research community is the use of AI in research writing. Despite considerable advances, including in prompt structuring, there remain copyright and legal limitations that make AI unsuitable for writing. Authors should carefully review the terms and conditions, terms of use, or any other conditions or licenses associated with AI tools. Authors must confirm that the AI technology does not claim ownership of their content or impose limitations on its use, as this could interfere with the author’s rights to use specific outputs in their manuscripts, or with the rights of the journal or an academic society.

Peer review remains at the heart of scholarly publication, safeguarding scientific integrity, ethical norms, and academic reputation. Traditionally, it has served as a quality assurance process, depending on expert judgment to assess originality, technique, interpretation, and relevance. The peer review process now faces both new opportunities and unprecedented problems because of the swift growth of AI in research and article preparation. Journals and publishers are now receiving large volumes of AI-assisted submissions that superficially look indistinguishable from human work. The prose is clean, citations are present, and the structure is sound. But reviewers who have dealt with such content in recent years have begun to detect a new class of failure modes: subtle, covert, and seriously detrimental in high-stakes contexts.

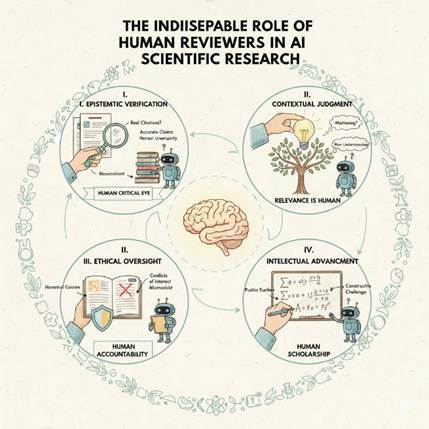

Automation and AI differ significantly, and the two terms should not be used interchangeably. AI is about designing intelligent systems, computers, and software that can emulate human intellect and behavior. As reviewers for scientific journals, we are not detached from it either. In the review process, AI can be used to detect language and grammatical errors and minor scientific contradictions. The COPE discussion recommends that when AI produces an outcome for an article, such as acceptance or rejection, an editor should be directly involved in the decision. Human oversight is crucial for protecting fairness and honoring authors’ rights during article evaluations.

Bias in tool training is a further challenge, since tools trained on historical datasets may need correction for biases. Reviewers should check for such biases and report them so that developers can update the tools based on this feedback. Reviewers should be transparent about which of their publishing processes or workflows are automated, and where AI decisions are involved. Any AI-powered automation should be clearly presented to the relevant participants in the peer review process (authors and reviewers), along with clarification of how the algorithm arrived at its result or conclusion.

Beyond these, the primary ethical challenges raised by the academic community regarding the use of AI for decision making in publishing systems center on three core components: accountability, responsibility, and transparency.

Reviewers play a key role in decisions on manuscripts, so much of the responsibility lies with them to follow guidelines such as those of WAME and COPE, as well as journal guidelines on the use of LLMs and generative AI. Papers should include an explicit acknowledgment of any use of AI tools, language editing software, or proofreading software. The reviewer panel is also expected to check whether a paper was written by generative AI tools or by humans.

Hallucinated citations are the most documented problem. AI systems produce references that look real, with correct author name formats, plausible journal titles, and coherent years, but that do not exist in real-world databases. A reviewer who does not verify citations independently will pass fabricated research into the record. In medicine, pharmaceuticals, and the life sciences, this is not a theoretical concern.

Confident misrepresentation of nuance is subtler. A qualified specialist reading AI-generated information in their own subject often observes that the system has collapsed a contentious empirical discussion into a false consensus or presented a preliminary discovery as if it were accepted science. The text is not wrong in any obvious sense; it is simply more certain than the evidence warrants. Identifying this requires genuine expertise, not algorithmic screening.

Stale knowledge compounds both problems. AI models have training cutoffs. In fast-moving fields like virology, oncology, cardiology, and machine learning itself, the gap between a model’s knowledge and current reality can be significant. Reviewers who know the current state of their field can identify these gaps, which an AI system cannot.

Experienced reviewers in AI-saturated environments are increasingly verifying:

- That every cited source exists and says what the text claims,

- That the level of certainty expressed matches the actual evidentiary base,

- That the analysis reflects developments post-dating common AI training cutoffs, and

- That domain-specific reasoning is substantively correct, not merely syntactically coherent.

This means investing in reviewer training that is specific to the AI era: not just “how to spot AI-written text” but how to evaluate claims in a landscape where the evidentiary floor has shifted. It means compensating and valuing review work in ways commensurate with its importance. And it means resisting the seductive efficiency argument that AI screening is “good enough” for high-stakes decisions about what counts as true, credible, and publishable.

Many tools provide detailed, word-by-word assessment of AI-written content. Still, research cannot be performed by AI; it is our responsibility to maintain accountability and research integrity. Reviewers are key stakeholders in maintaining that accountability.

Quality and integrity in the AI era do not arise from improved generation; they develop from better review.